Why You Can’t Tell Deepfake from Reality

Neil DuPaul

Reading time: 4 minutes

Deepfakes are out of control. That’s not just my bias. According to UNESCO, 46% of fraud researchers have encountered them. I recently confirmed the trend for myself after uploading a “professional” image of myself to LinkedIn to announce my upcoming attendance at RSA.

The post reached more than two thousand people. It included a challenge: “Only folks who know the real me will be able to find the ONE THING in this post that is AI generated.” It didn’t exactly go viral, but out of all the reactions, one thing soon became clear. Deepfakes have gotten too good.

Only the host of our podcast “What the Hack?” called out my headshot, which was AI generated because I couldn’t make the in-person shoot.

When the world turned fake

I remember when you would see a professional headshot on LinkedIn and know that person put in a lot of effort. Maybe even paid a professional photographer. Now, that’s not the case.

What changed? You can ask an LLM to clean you up: no groomer, no studio, no nothing.

AI image generators are no longer the novelty that let us churn out dogs with two tails and hands with seven fingers. The tech advanced faster than anyone thought possible.

Canva and a flotilla of others let you upload a selfie and turn it into your professional headshot with a couple of clicks. Because our images are “out there,” anyone can create a convincing image of you.

And if that doesn’t scare you, it should.

Deepfakes and impersonation

Images are just the beginning of the deepfake problem. Anyone who has your picture and a three-second recording of your voice can impersonate you on the phone, via email, or even in a video call, and most people won’t notice the difference.

Cybercriminals know that. It’s why AI-enabled cybercrime surged by 89 percent and cost Americans billions last year.

We’re not completely cooked yet though, right? Look up “how to know if something is an AI image” and you’ll see lots of tells that read like a catalog at the Smithsonian Museum of week-old tech. They include gibberish lettering, overlapping objects, blurred edges, fused teeth, and distorted hands. And I could add more to that list.

None of those were present in my deepfake.

Fortunately, it wasn’t completely undetectable. At least one colleague knew. You see what that says about the attack vector, right?

Why impersonation works

The reality is that a cybercriminal who wants to impersonate you needs more than just your picture. What puts you at risk isn’t just one piece of information. It’s multiple pieces, pulled together in a way that’s far too frictionless in today’s world.

All it takes is a Google search and a quick look at profiles on sites like Spokeo and Whitepages.

Add back some friction.

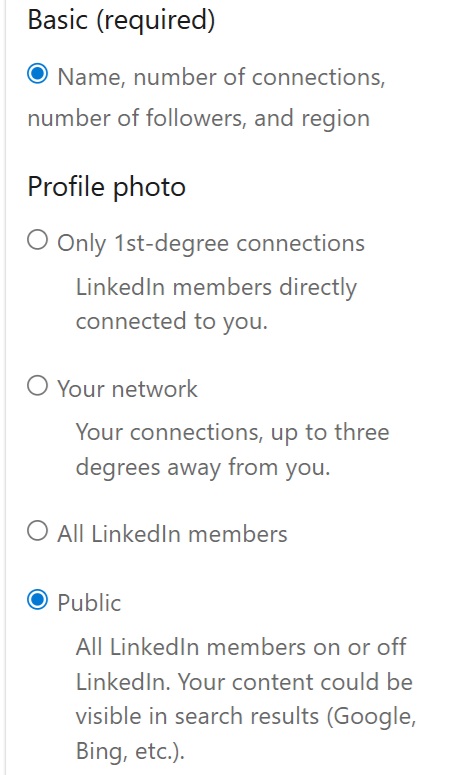

Make it hard to find a good photo of you. If you need a professional headshot on LinkedIn, limit who can see it: click your profile picture, then go to Settings & Privacy > Visibility > Edit Your Public Profile. On the left side of your screen, you’ll see the options pictured above. Limit your profile photo to only 1st-degree connections, or at the very least to LinkedIn members, so it won’t show up in a regular Google search.

Turn your other social media profiles private. Opt out of data broker sites or let a privacy solution do it for you.

The important thing is to make it a little tougher for the cybercriminals, scammers, and impersonators so it’s not quite so easy to take over your whole identity in minutes.

Final thoughts

RSA is coming up fast, and I’m sure deepfakes will be a topic of conversation. Let’s make sure that the risk posed by data broker sites and exposed personal information doesn’t get lost in the noise.

See you there.

Learn more

- See where your data is exposed with our free scan.

- Cut your digital footprint with these five-minute privacy tips.

- Read our 2026 data privacy predictions for consumers.

